SEO Revisit: What Actually Changed After 4 Weeks

Why this follow-up exists

Four weeks ago I wrote about how a simple infrastructure change escalated into an SEO rabbit hole - and, as I know next to nothing about SEO, eventually into an AI rabbit hole.

The trigger was a migration away from Azure Front Door: while rebuilding the deployment pipeline, I made a series of unexpected SEO discoveries.

For context, here’s the original article: From Azure Front Door Back to Zero Cost – Migrating to Azure Static Web Apps

That article deliberately ended without conclusions because at the time, there was no measurable data.

This post closes that loop by interpreting my current Google Search Console data.

All explanations and interpretations may be AI-infused.

The baseline and the experiment setup

Before any SEO-related changes, the blog was technically indexed but almost invisible.

Impressions existed, but clicks were essentially zero and most visibility came from name-based queries rather than topic-driven searches.

That matters because without a baseline, any later movement becomes anecdotal.

What was changed intentionally

The changes themselves were deliberately small and controlled.

I added JSON-LD snippets, reworked keywords, and cleaned up sitemaps. Titles were rewritten to be explicit rather than clever. I changed meta descriptions to set expectations rather than summarize content, and aligned headings more closely with search intent.
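To make the JSON-LD part more concrete, here is a minimal sketch of what generating such a snippet could look like. It is not the code used on this blog: the function name `blog_posting_jsonld`, the chosen schema.org fields, and all example values (URL, date, author) are assumptions for illustration only.

```python
import json


def blog_posting_jsonld(headline: str, url: str, date_published: str, author_name: str) -> str:
    """Return a <script> tag carrying schema.org BlogPosting metadata."""
    data = {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        "url": url,
        "datePublished": date_published,
        "author": {"@type": "Person", "name": author_name},
    }
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so that a value can never close the script element early.
    # "\/" is a valid JSON escape, so parsers still read the original text.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical values: URL, date and author are placeholders.
snippet = blog_posting_jsonld(
    headline="Automated Hexo build with GitHub Actions",
    url="https://example.com/automated-hexo-build/",
    date_published="2020-01-01",
    author_name="Jane Doe",
)
print(snippet)
```

The resulting tag would typically go into the page's `<head>`. Which properties search engines actually use is something I would check against the current structured data documentation rather than take from this sketch.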

I also added internal links where articles were clearly related. Examples include linking articles about automation and infrastructure, such as:

- Automated Hexo build with GitHub Actions

- Deploy static files to Azure Storage with GitHub Actions

- Emojis, collations and prepared statements - A new path to encoding hell

What I explicitly did not do: No new content was published specifically for SEO and no external traffic sources were introduced.

The goal was not aggressive optimization but a controlled experiment.

Google Search Console after four weeks

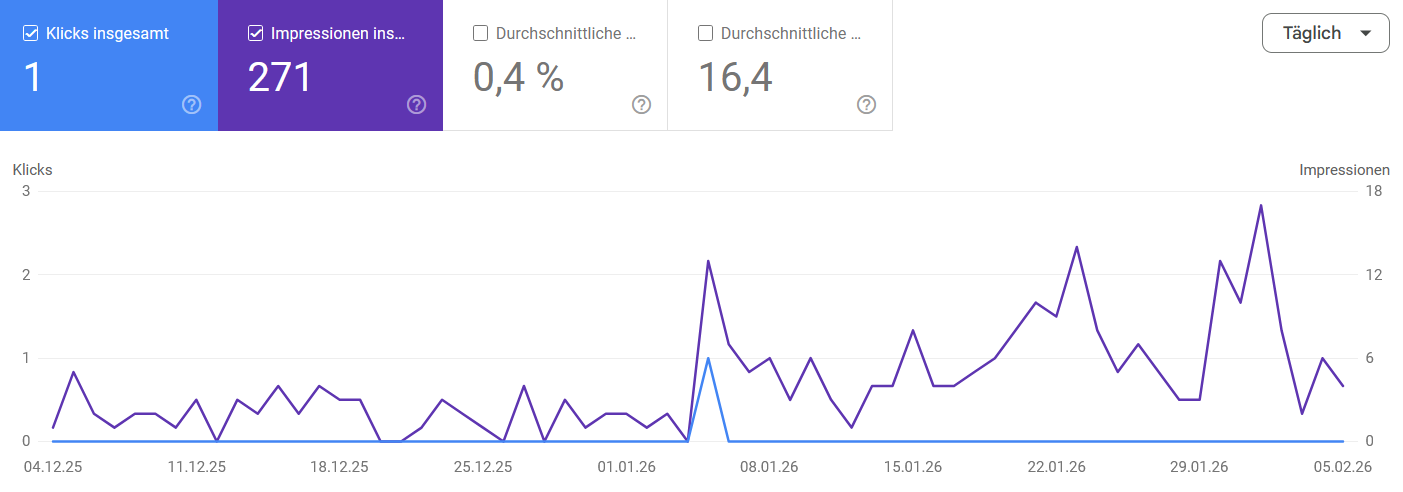

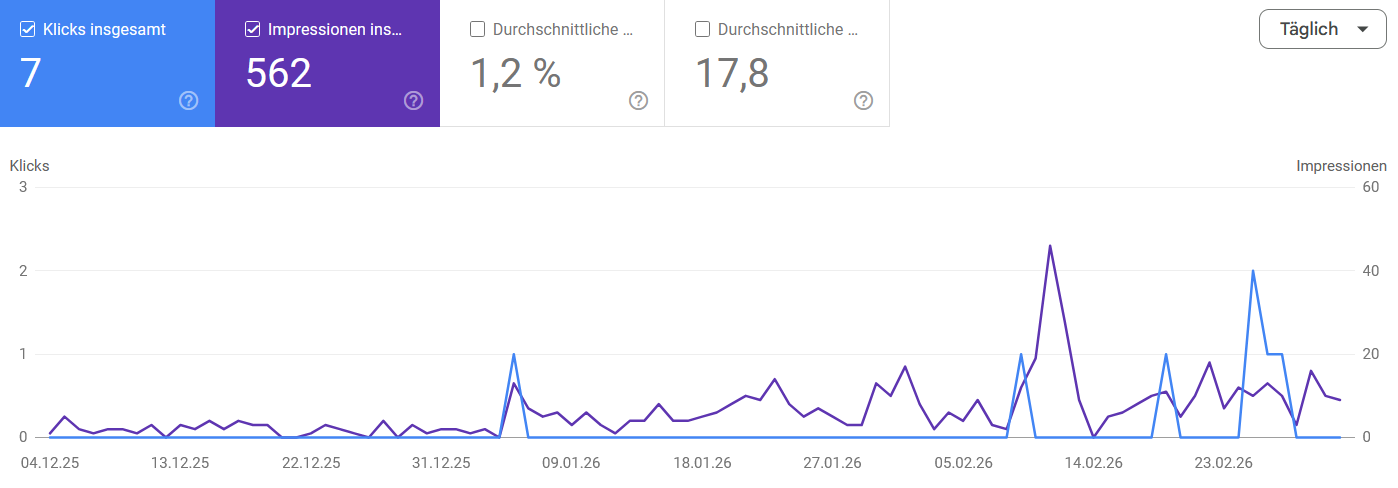

After four weeks, Google Search Console should have accumulated enough data to show some movement beyond noise.

At this stage, impressions matter more than clicks, because increased visibility is a prerequisite for traffic. At least, that’s what the AI told me.

Compared to the previous period, the numbers below represent roughly a doubling of impressions and a noticeable increase in clicks.

Key numbers

During the observation window the blog generated roughly:

- 562 impressions

- 7 clicks

- CTR around 1.2%

- average position around 17.8

At first glance a CTR around one percent might look disappointing.

However, an average position of around 17.8 sits in the lower half of the second search results page (assuming ten results per page), and positions there rarely generate meaningful click-through rates.

In other words:

Visibility increased before traffic did, which is the expected sequence for a small site.
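The key numbers above can be cross-checked with a few lines of arithmetic. This is only a sanity check of the reported figures; the value of ten results per page is my assumption and does not come from Search Console.

```python
import math

impressions = 562
clicks = 7
average_position = 17.8
results_per_page = 10  # assumption: classic ten-results-per-page layout

# CTR is simply clicks divided by impressions.
ctr_percent = clicks / impressions * 100

# Positions 1-10 map to page 1, 11-20 to page 2, and so on.
results_page = math.ceil(average_position / results_per_page)

print(f"CTR: {ctr_percent:.1f}%")  # CTR: 1.2%
print(f"Average position {average_position} falls on results page {results_page}")
```

So the reported CTR of about 1.2% is consistent with 7 clicks on 562 impressions, and an average position of 17.8 lands on page two under that assumption.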

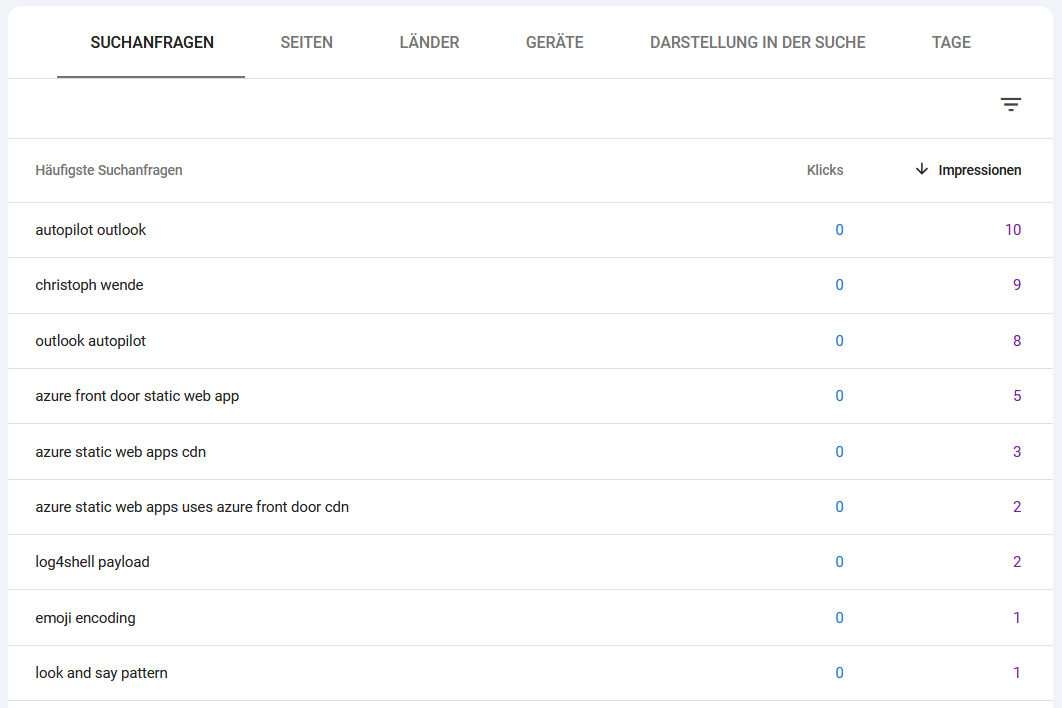

Query-level visibility

The query table shows which topics Google is experimenting with.

One interesting observation is that visibility did not concentrate on the newest article.

Instead, impressions were distributed across several older posts, covering very different technical topics.

For a small personal blog like mine, this suggests that Google is testing individual articles independently, rather than ranking the site as a whole.

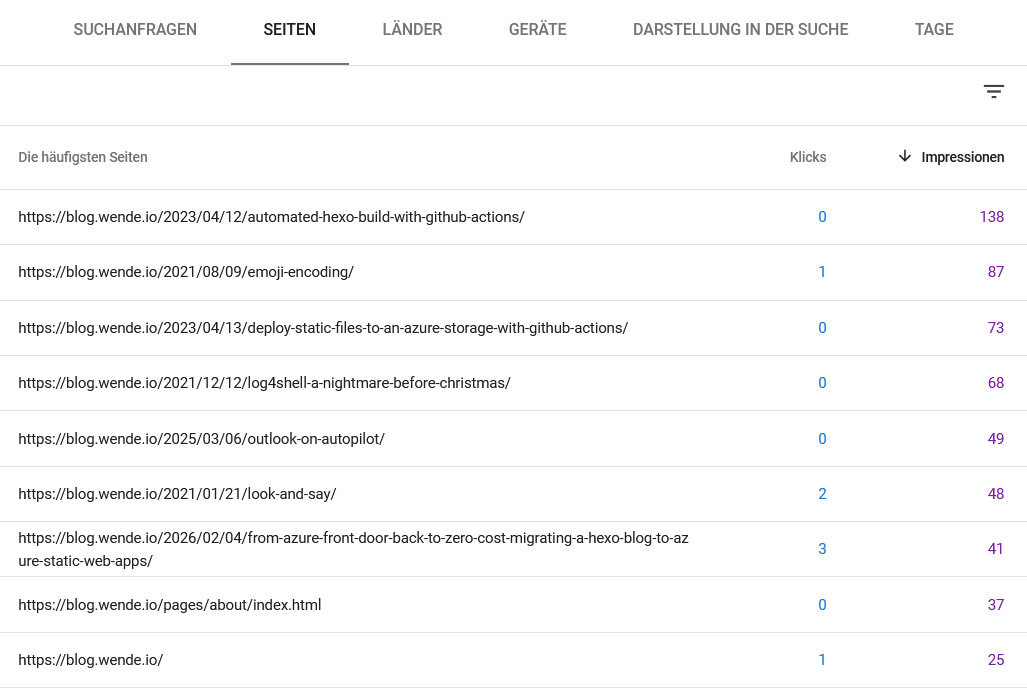

Page-level effects

Switching the report from queries to pages shows which articles actually received impressions.

Two examples illustrate the difference between impressions and clicks.

Example: impressions without clicks

The article Automated Hexo build with GitHub Actions generated the highest number of impressions during the observation window (around 138 impressions), but received no clicks.

This usually indicates that Google has started testing the page in search results, but the ranking position is still too low to produce traffic.

This behaviour is typical during early indexing experiments.

Example: impressions with clicks

A different pattern appeared for the article From Azure Front Door Back to Zero Cost – Migrating to Azure Static Web Apps. This page generated both impressions and a small number of clicks.

While the numbers are still small, it suggests that the article matches a specific search intent and that the snippet aligns reasonably well with the queries where it appears.

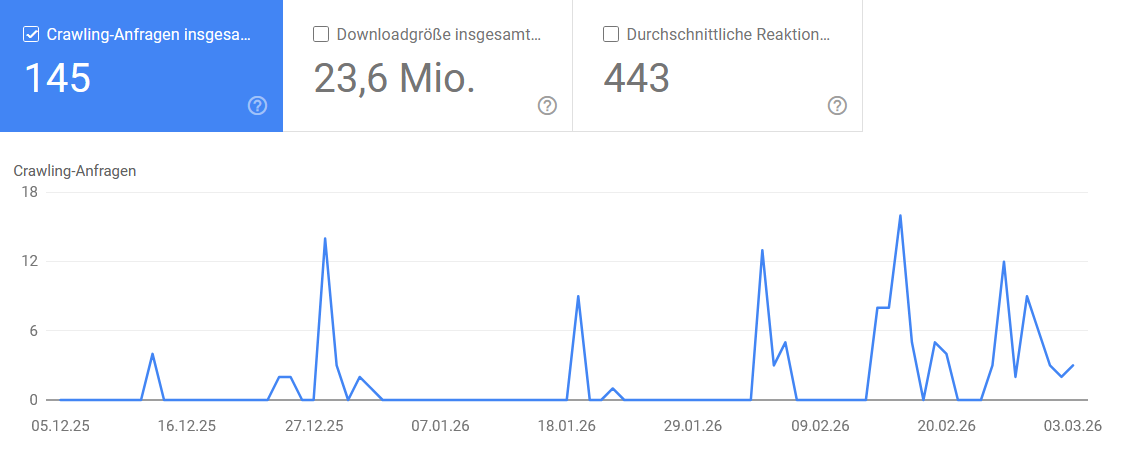

Crawl activity

Search Console crawl statistics also show consistent crawler activity.

Over the last 90 days, Googlebot made roughly 145 crawl requests.

For a small static blog with fewer than twenty articles, this indicates that the crawler revisits the site regularly.
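As a rough back-of-the-envelope check of what "regularly" means here, the 145 Googlebot requests over 90 days can be broken down as follows. Using twenty as the article count is an upper bound taken from "fewer than twenty articles", and treating every request as an evenly spread article hit is a simplifying assumption, since crawl requests may also cover other URLs such as the sitemap or assets.

```python
crawl_requests = 145  # figure from the crawl stats report
window_days = 90
max_articles = 20     # upper bound: the blog has fewer than twenty articles

# Average number of Googlebot requests per day over the window.
requests_per_day = crawl_requests / window_days

# Lower bound per article, assuming all requests hit articles evenly.
min_requests_per_article = crawl_requests / max_articles

print(f"Requests per day: {requests_per_day:.1f}")
print(f"Requests per article (at least): {min_requests_per_article:.2f}")
```

Under these assumptions, that is a bit more than one request per day, which fits the impression of a crawler that keeps coming back rather than one that visited once and left.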

What the Google Search Console data suggests

Four weeks is still a very short observation window for search engines. The data does not show a finished result yet, but it does suggest that something has started to move.

The site appears to have shifted from being mostly invisible to being included in early ranking experiments. Several articles are now receiving impressions, a few have generated their first clicks, and Googlebot is visiting the site regularly.

While the numbers are still small, they are encouraging signals. They indicate that the content is at least being considered and tested in search results.

What remains unclear is whether this visibility will stabilize, improve further, or disappear again once Google’s ranking experiments settle.

To get a clearer picture, I plan to revisit the data in about two months, once search visibility has had more time to stabilize.

Disclaimer

The interpretations in this article about SEO behaviour, ranking signals, and search engine decision processes are not based on formal expertise. Most of the explanations and hypotheses were generated with the help of an AI assistant.

They should therefore be understood as informed guesses rather than verified facts. They reflect what was suggested to me and how I interpreted the data available in Google Search Console.

In the absence of better knowledge, this is the model I currently use to reason about what might be happening. As with most things related to search engines, parts of it may be wrong.